A change in leadership, employees with backgrounds in humanities, and a switch to a “slower, smaller” approach – this whistleblower darling is on a crusade to save lives by saving Facebook.

About 10,000 people were packed into the Altice Arena in Lisbon, Portugal, on the opening night of the Web Summit. It was one of the first tech gatherings of this size since the outset of the pandemic. And Web Summit’s CEO, Paddy Cosgrave, never one to shy away from thorny issues, was going to have a field day with it.

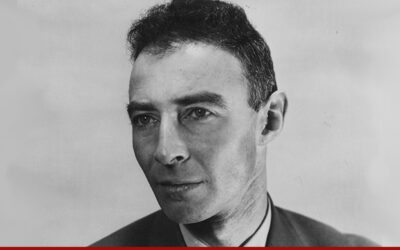

The opening speaker, no CEO or tech titan, was instead a young, genuine, and even unassuming blond woman who looked like she was plucked from an Iowa cornfield. And in an instant, Frances Haugen became the trending topic and darling of Web Summit.

Haugen (who really is from Iowa) has been thrust into the spotlight as the Facebook whistleblower. The former Facebook employee left her job as a product manager in May, taking thousands of internal documents with her.

And then she wrestled with how to do the right thing with the information she had, she told the audience. It was information that paints a sad picture of a company that has repeatedly valued profits over ethical business, sown discord, and created unhealthy dependencies.

On stage, to her right, sat Libby Liu, the CEO of Whistleblower Aid, a powerful organization that chose to represent Haugen only after a careful and laborious vetting process that looked at everything from her motives to her evidence. Now, with her as its client, the group coaches her, covers her legal fees, and even offers bodyguards and protection. Liu said Whistleblower Aid looks for a “strong sense of conscience, and an inability to tolerate wrong” before taking on a client. “They should not need to be torn between their careers and what’s right.”

Haugen is a whistleblower for our time. She is the “every girl” who worked hard and had the dream of working at Facebook. Within moments on stage she told the audience that her mother is a priest, one she turned to often for advice and counsel before deciding to come forward. Her mother, she said, told her that “every human being deserves the dignity of the truth.”

“There’s been a pattern of behavior at Facebook where they have consistently prioritized their own profits over our general safety,” said Haugen. Facebook, she said, “has tried to reduce the argument to a false choice between censorship and free speech.” But in fact, she explained, it’s the algorithmic decisions Facebook runs its business on that amplify political discord, spread misinformation, and contribute to addictive, unhealthy behaviors. The engagement Facebook creates is dangerous because it is based on a megaphone effect in which the most volatile posts are the most amplified.

Haugen has no shortage of remedies for what ails Facebook. She gives props to Twitter and Google, which she says more deftly and more transparently manage content moderation. “We need to make it slower and smaller, not bigger and faster,” she said of Facebook, explaining that smaller groups of family and friends are easier to moderate. She argued that computer scientists need to have a moral compass, and said that happens best when they have a background in humanities as well as coding. And certainly algorithms can be bettered, to change the dynamic of what information is presented to whom.

Finally, she said, “I’m a proponent of corporate governance,” reminding the audience that Mark Zuckerberg is the Chairman, CEO, and Founder of the company, holding well over half of the voting shares. Things are “unlikely to change if that system remains,” she said.

“Maybe it’s a chance for someone else to take the reins. Facebook would be stronger with someone who’s willing to focus on safety,” she went on. She rued the fact that Facebook just announced hiring 10,000 engineers to build out its new Meta product, when that many engineers could have been deployed to fix Facebook’s obvious current problems. Though the company has publicly pushed back, declaring that her documents were a “cherrypicked” sample taken out of context, Haugen replied that she welcomes them to release more documents to the public.

At Web Summit, Facebook had ample opportunity to respond, but after Haugen’s authentically empathetic and smart delivery, its messages rang a bit hollow. Nick Clegg, who appeared at the conference via livestream, said, “There are always two sides to a story,” noting that Facebook’s artificial intelligence systems study the prevalence of hate speech on its platforms. “Out of 10,000 views of content, five might include hate speech – that’s 0.05%,” he said.

Chris Cox, one of Facebook’s earliest employees, now head of product and a close friend of Zuckerberg, also appeared on stage via livestream, ostensibly to showcase the new Meta agenda. But he offered these words in response to questions about Haugen’s comments: “It’s been a tough period for the company.” He added that he welcomed “difficult conversations” around Facebook’s future.

The media, lawmakers, and the Web Summit audience were enamored of Haugen and her message, which came peppered with wonderfully genuine, demure comments like, “I don’t like to be the center of attention.”

But she came across as a force of nature when she said “Facebook would be stronger with someone who’s willing to focus on safety.” She is committed to a crusade she believes is fundamental to saving lives. What makes her not afraid, she said, is that “I genuinely believe that there are a million, or maybe 10 million lives on the line in the next 20 years, and compared to that, nothing really feels like a real consequence.”

“I have faith that Facebook will change.”
